Catriona Matthews, Jake Hilliard, and James Openshaw ~ Learning Designers

Earlier this year, we proudly shared a blog post introducing the updated ICEBERG principles – a set of seven evidence-based learning design principles that positively impact student retention. Originally created in 2016, the principles were updated across 2023-2024 to reflect new research, student insights, and evolving practices at The Open University (OU).

One of the core foundations of our learning design practice is ensuring that our work is based on robust and up-to-date evidence. In this follow-up post, we want to share a behind-the-scenes look at the detailed, and incredibly rewarding, process that went into updating the ICEBERG principles.

Teamwork makes the dream work

Whilst updating the ICEBERG principles, we worked with colleagues based in the Institute of Educational Technology (IET). IET researches innovative technologies and educational development approaches to understand ways to support learning, teaching and assessment. By combining their research skills with our practical knowledge of learning design and use of the principles within the OU, we were able to create a superstar ICEBERG reviewing team.

The plan

We set ourselves several goals:

- Gather evidence of the impact of the ICEBERG principles.

- Identify any challenges to the ICEBERG principles, e.g. evidence that a principle may have an adverse rather than positive effect on retention.

- Explore whether there are any other ways of increasing retention through learning design which the ICEBERG principles don’t currently capture.

To do this, we embarked on a series of literature reviews and a survey of OU students’ experiences of withdrawing from or pausing their studies.

Search, read and repeat

If you have ever undertaken a literature review, you’ll know that it can be a gargantuan and often overwhelming task. To help us keep things manageable, we decided to split the task in two:

- Internal review – We identified and reviewed OU-specific reports and publications since 2016, which either focused on retention or had links to the ICEBERG principles.

- External review – We analysed external papers identified through citations of the original ICEBERG report and through targeted keyword searches in academic databases.

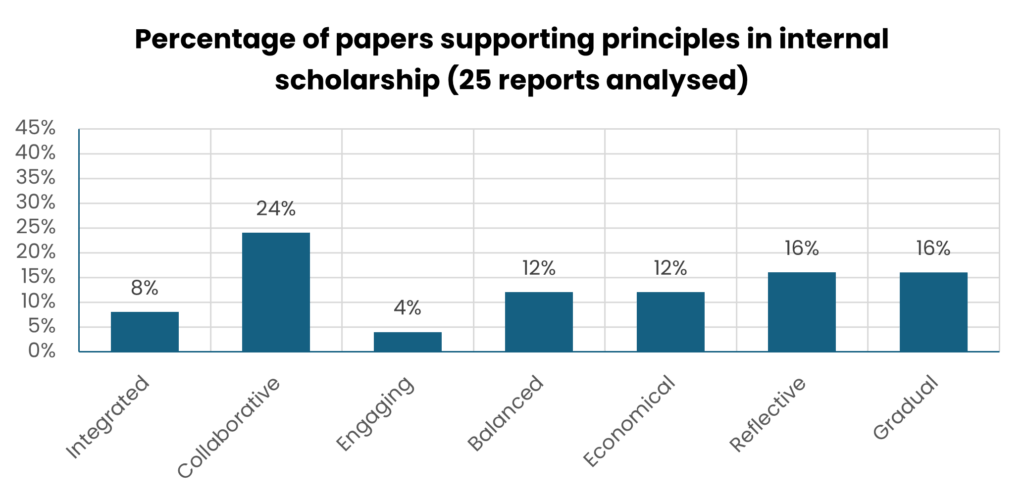

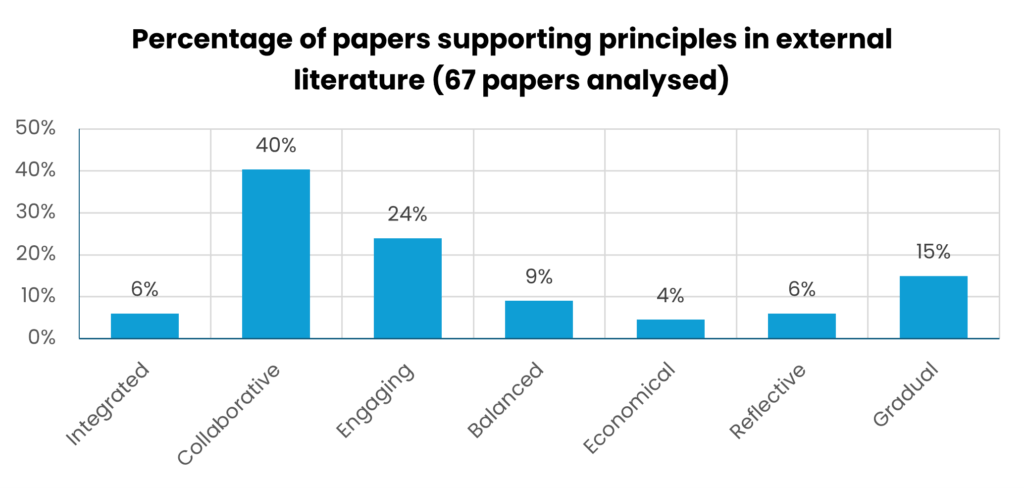

All in all, 92 reports and papers were analysed, their connection (or not!) with the ICEBERG principles logged, and any new tips noted down. The findings revealed strong support for most of the principles while also highlighting areas for refinement, such as skills development across qualifications, assessment feedback, and embedding opportunities for reflection into curriculum design.

The graphs above (Figures 1 and 2) show the percentage of internal and external studies supporting one or more of the 2016 ICEBERG principles.

Listening to student voices

To complement the literature review, we also surveyed the OU’s Curriculum Design Student Panel (CDSP). The CDSP is a voluntary group of students who contribute to curriculum design by completing surveys, testing curriculum content and interactive tools, providing feedback on prototypes, and sharing reflections on their past study experiences.

We asked the panel (made up of 1827 students at the time) about their experiences of withdrawing from or pausing their studies. As well as reviewing the quantitative data from the 121 students who responded, we also thematically analysed the hundreds of qualitative comments using a six-phase process defined by some rather clever folk called Braun and Clarke (2006).

The themed responses were reviewed against the 2016 ICEBERG principles and design tips and grouped into overarching categories of ‘institutional’, ‘situational’ and ‘dispositional’. This helped us to evaluate causality and identify connections with learning and module design. The feedback corroborated findings from the literature review and provided strong support and evidence of the impact and importance of the principles. It also highlighted additional areas for consideration such as assessment guidance and feedback, module community and workload visibility.

Turning data into reality

From the mass of data we collected from the literature and our generous student volunteers, we learnt an awful lot. Reassuringly, we found plenty of evidence confirming that the seven principles, although in need of some tweaking, were very much still relevant and important.

We then had the tricky job of trying to update the principles and design tips whilst keeping them as short and succinct as we could. This involved taking our findings, debating which principle they fitted within, and then plenty of writing, rewriting and editing.

During this process we wanted to ensure the principles aligned with and complemented OU strategies relating to sustainability, teaching and learning, inclusivity and access, participation, and progress. Colleagues in various sustainability and Equality, Diversity, Inclusion and Accessibility working groups were generous with their time and feedback, reviewing our proposed changes and utilising their vast expertise to ensure we didn’t miss anything important.

Our last job, once the principles and design tips were agreed and polished, was to create a series of resources to share the principles and help colleagues embed them in their practice. These included an ‘ICEBERG health check’ tool and a ‘quick guide’ to implementing the ICEBERG principles.

Fin (for now)!

We hope you enjoyed this insight into the work that went into updating the framework. Did you expect it to be such a lengthy and detailed process?

If you use the ICEBERG principles or any of the resources shared here, we would love to hear from you. You can also get in touch if you would like to chat to the Learning Design team about any retention-related support you’d find useful for your organisation, whether that’s running a session for you, helping you to use some of the resources we’ve shared via this blog, or simply offering a bit of advice.

Please contact us at: [email protected].

References:

Braun, V., & Clarke, V. (2006). Using Thematic Analysis in Psychology. Qualitative Research in Psychology, 3(2), 77–101. https://doi.org/10.1191/1478088706qp063oa

Banner image: Aruizhu via Adobe Stock