In describing our use of (simple) PMatch to match answers to short-answer free-text questions, I may have made it sound too simple. I’ll give two examples of the sorts of things you need to consider:

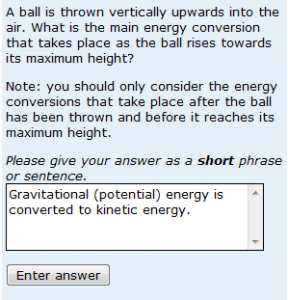

Firstly, consider the question shown on the left. I’m not going to say whether the answer given is correct or incorrect, but note that the answer ‘Kinetic energy is converted to gravitational (potential) energy’ includes exactly the same words – and responses of both types are commonly received from real students. So word order matters.
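To make the point concrete, here is a minimal sketch in plain Python (not PMatch syntax). The helper names `words`, `same_bag` and `in_order`, and the second sample response, are assumptions made purely for illustration. It shows that a check that ignores word order cannot tell the two responses apart, whereas a check that looks for key words in sequence can.

```python
import re

def words(response):
    """Lower-case the response and split it into alphabetic words."""
    return re.findall(r"[a-z]+", response.lower())

def same_bag(a, b):
    """True if both responses use exactly the same set of words."""
    return set(words(a)) == set(words(b))

def in_order(response, required):
    """True if the required words occur in the response in the given order."""
    remaining = iter(words(response))
    # 'word in remaining' consumes the iterator, so each search
    # resumes from just after the previous match.
    return all(word in remaining for word in required)

# Hypothetical pair of responses built from the same words.
a = "Kinetic energy is converted to gravitational energy"
b = "Gravitational energy is converted to kinetic energy"

print(same_bag(a, b))  # the two responses are indistinguishable as word sets
print(in_order(a, ["kinetic", "converted", "gravitational"]))
print(in_order(b, ["kinetic", "converted", "gravitational"]))
```

Which of the two orderings should be accepted depends on the question. The sketch only shows that the matching rule has to encode the order somehow.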

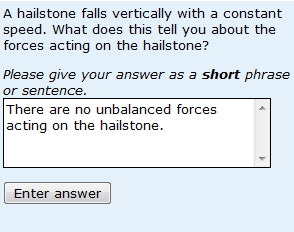

The other thing to take care with is negatives. As I’ve said before, it isn’t that students are trying to trick the system. However, responses that would be correct were it not for the fact that they contain the word ‘not’ are surprisingly common. So answer matching needs to be able to deal with negation.

Also note that students are prone to give their answers in the form of sentences containing double negatives, as in the example shown on the right.
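One crude way to think about negation and double negatives is to count the negating words in a response: an odd count suggests the statement is negated, an even count suggests the negatives cancel. The sketch below is plain Python rather than PMatch. The word list, the function names and the sample sentences are all assumptions for illustration, and the heuristic will misjudge many real sentences, so it would need checking against actual student responses.

```python
import re

# Hypothetical, deliberately short list of negating words.
NEGATIONS = {"not", "no", "never", "isn't", "aren't", "doesn't",
             "don't", "won't", "can't", "cannot"}

def tokens(response):
    """Lower-case, normalise curly apostrophes, and split into words."""
    text = response.lower().replace("\u2019", "'")
    return re.findall(r"[a-z']+", text)

def is_negated(response):
    """True if the response contains an odd number of negating words."""
    count = sum(1 for token in tokens(response) if token in NEGATIONS)
    return count % 2 == 1

# Hypothetical responses, not taken from real student data.
print(is_negated("The forces are balanced"))
print(is_negated("The forces are not balanced"))
print(is_negated("It isn't true that the forces are not balanced"))
```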

I’d like to emphasise – again – the importance of using real student responses in developing answer matching for questions of this type. I say that because it is our inspection of actual student responses that has shown us the sorts of responses that our students give. Your students may give totally different responses.

In developing answer matching I have three times made single omissions that resulted in a lot of responses being ‘missed’. Firstly, I forgot about a double negative. Secondly, I missed a simple synonym (and as a result I missed 40% of student responses – students, in their droves, gave an answer that neither I nor their tutors had thought of). My final mistake was to accept ‘crystallise’ whilst overlooking ‘crystallize’. This problem was actually caused by a limitation in the software that we were using at the time (in not automatically allowing – or at least suggesting – UK and US spellings). However, it leads to a final recommendation for good question design – don’t try to make your answer matching too tight. For example, if you want ‘sulfur’ to be spelt ‘sulfur’ not ‘sulphur’ (or vice versa), that’s your choice. However, students will use the alternative spelling, so in making this sort of decision you need to be able to justify it in terms of the learning outcome the question is designed to assess – and be prepared for a variant of a question that requires students to be able to spell ‘sulfur’ to score considerably lower than one that is about a different element.
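As an illustration of loosening the matching for spelling, the sketch below maps variant spellings onto a single preferred form before comparing. It is plain Python, not the software we were using. The `VARIANTS` table and the function names are hypothetical, and a real table would have to be built up from the responses students actually give.

```python
import re

# Hypothetical table mapping a variant spelling to the preferred form.
VARIANTS = {
    "crystallize": "crystallise",
    "sulphur": "sulfur",
}

def normalise(response):
    """Split into lower-case words and replace known variant spellings."""
    found = re.findall(r"[a-z]+", response.lower())
    return [VARIANTS.get(word, word) for word in found]

def mentions(response, target):
    """True if the response contains the target word in any listed spelling."""
    return VARIANTS.get(target, target) in normalise(response)

print(mentions("The salt will crystallize", "crystallise"))
print(mentions("Sulphur is a yellow solid", "sulfur"))
print(mentions("Carbon is a black solid", "sulfur"))
```

If a question really is intended to assess spelling, the relevant entry can simply be left out of the table.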