I am in a (very brief) lull between the Assessment in Higher Education Conference, CAA 2013, masses of work in my ‘day job’ and a determination both to carry on writing papers and to get some time off for walking and my walking website. The Assessment in Higher Education Conference was great and I hope to post about some of the things I learned before CAA 2013 starts on Tuesday. First, though, I’d like to reflect on something completely different.

During the week I was at an Award Board for a new OU module. All did not run smoothly. The module requires students to demonstrate ‘satisfactory participation’, but we’d used a horrible manual process to record this. Not surprisingly, errors crept in. Now the OU Exams procedures are pretty robust and the problem was ‘caught’. We stopped the Award Board, all the data were re-entered and checked, checked and checked again and we reconvened later in the week – and brought the board to a satisfactory conclusion. My point is that people make mistakes.

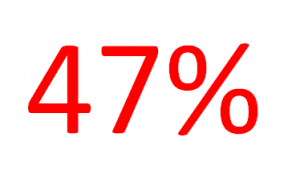

Next I would like to reflect on the degree results of one of my young relations at a UK Russell Group University. She got a 2:1, which was the classification she was aiming for, but was devastated that she ‘only’ got 64%, not the 67% she had hoped for. Now this is in a humanities subject – how on Earth can you actually distinguish numerically to that level of precision?

My general point is that, given that humans make mistakes – and even if they don’t, their marking is pretty subjective – why do we persist in putting such faith in precise MARKS? It just doesn’t add up. I am pretty confident that, at our Award Board, we made the right decisions at the distinction/pass/fail boundaries and I am similarly confident that my young relative’s degree classification is what was demonstrably achieved. I’d reassure those of you who have never sat on an Award Board that considerable care is taken to make sure that this is the case. However, at a finer level, can we be sure about the exact percentage? I don’t think so.