I’ve written quite a lot previously about what you can learn about student misunderstandings and student engagement by looking at their use of computer-marked assignments. See my posts under ‘question analysis’ and ‘student engagement’.

Recently, I had cause to take this slightly further. We have two interactive computer-marked assignments (iCMAs) that test the same material and are known to be of very similar difficulty. Some of the questions in the two assignments are identical; most are slightly different. So when we see very different patterns of use, this can be attributed to the fact that the two iCMAs are used on different modules, with very different student populations.

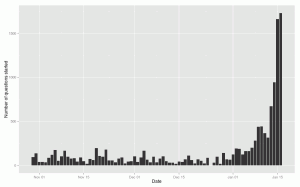

Compare the two figures. These simply show the number of questions started (by all students) on each day that the assignment is open. The first figure shows a situation where most questions are not started until close to the cut-off date. The students are behind, struggling and driven by the due date (I know some of these things from other evidence).

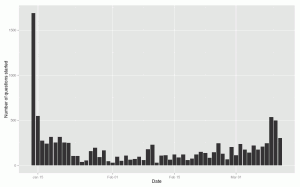

The second figure shows a situation in which most questions are started as soon as the iCMA opens – the students are ready and waiting! These students do better by various measures – more on this to follow.
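Both figures are simple daily tallies of question starts. For anyone wanting to produce the same kind of plot from their own data, here is a minimal sketch. It assumes a hypothetical log in which each question start is recorded as a `datetime.date`; real iCMA logs will almost certainly look different. The function names `starts_per_day` and `share_in_final_days` are illustrative only, and the "share in the final days" figure is my own rough way of summarising the difference between the two patterns, not a measure used in the figures.

```python
from collections import Counter
from datetime import date, timedelta


def starts_per_day(start_dates, open_date, close_date):
    """Count question starts on each day the assignment is open (inclusive).

    start_dates is assumed to be an iterable of datetime.date values, one per
    question started (a hypothetical log format). Days with no starts are
    reported as 0 so that the series has no gaps when plotted.
    """
    counts = Counter(d for d in start_dates if open_date <= d <= close_date)
    n_days = (close_date - open_date).days + 1
    days = [open_date + timedelta(days=i) for i in range(n_days)]
    return [(day, counts[day]) for day in days]


def share_in_final_days(daily, final_days):
    """Fraction of all starts that fall in the last `final_days` days."""
    total = sum(count for _, count in daily)
    if total == 0 or final_days <= 0:
        return 0.0
    late = sum(count for _, count in daily[-final_days:])
    return late / total


# Made-up example: a ten-day window with most starts just before the cut-off.
open_d, close_d = date(2024, 3, 1), date(2024, 3, 10)
log = [date(2024, 3, 9)] * 6 + [date(2024, 3, 10)] * 3 + [date(2024, 3, 2)]
daily = starts_per_day(log, open_d, close_d)
print(share_in_final_days(daily, 3))
```

With these invented numbers, 9 of the 10 starts fall in the last three days, so the share is 0.9, which is the kind of value you would expect for the deadline-driven pattern in the first figure; the "ready and waiting" pattern would give a much lower value.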
