At CAA 2012 I gave a paper with the title ‘Short-answer e-assessment questions: five years on’, in which I discussed OU work in this area. There was a lot of interest in what I said, especially concerning evaluation findings. However, I wanted to get a discussion going on the reasons why more people don’t use assessment items of this type, and this didn’t really happen. So I’m trying again here. (My CAA 2012 paper is at Open Research Online if you want more background information.)

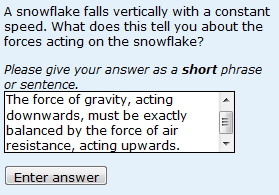

What might be the reasons for the lack of widespread use of short-answer free-text questions? People have suggested that students might not like questions of this type or might find them difficult. I have evidence that implies that neither of these things is generally true. So is the marking sufficiently accurate? Yes, it is. The point that most people seemed to take away from my presentation is that computers mark at least as accurately as human markers. I’ve reported this before, and others have reported on the lack of accuracy in human marking of GCSE and A level papers, so it should no longer surprise us. But it does. Similarly, people remain surprised that PMatch’s relatively simple answer matching is as effective as more sophisticated answer matching. In summary, I’d want to be careful before using PMatch for high-stakes summative e-assessment questions (though, in similar fashion, I will never again be able to have great confidence in outcomes determined by human markers), but for low-stakes use it is fine…

Provided, that is, that answer matching is developed iteratively using marked responses from real students. Most of the problems I have encountered have not been to do with particularly sophisticated or borderline responses but rather with synonyms that I simply hadn’t thought of. The number of responses needed in order to develop sufficiently accurate answer matching varies from question to question, but it is usually at least 200 responses. This means two things:

1. Developing the answer matching rules and amending them is a time-consuming activity. True. I was only able to do this work because I was fortunate to have a CETL-funded teaching fellowship. And, at the OU, with large student numbers (approx. 4000 per year for the module in question) and an ability to re-use questions from year to year, time spent in the early years of a module is recouped later. Machine learning offers the potential to take some of the drudgery out of developing the answer matching rules, but an ‘expert’ human marker has to mark the responses in the first place (or you could get several markers to mark the responses, and then consider situations in which the human markers disagree).

2. If you simply don’t have the student numbers in order to gather a large number of responses, you’ve got a problem. True. We chose to gather responses online (because we were not sure that responses to questions asked on paper would be sufficiently similar) but I think responses gathered on paper would probably be sufficiently similar in order to develop answer matching, and other people have done this. It would also be OK to offer a question as an ‘add on’ for a small number of students, and to gather sufficient responses until you felt sufficiently confident to ‘use it in anger’. However, in my opinion, the ultimate limitation to the use of short-answer free-text questions is not having sufficient marked responses.
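The iterative development described above can be sketched in a few lines of Python. This is only an illustration of the idea, not PMatch syntax and not the rules we actually use: the helper names (`make_rule`, `disagreements`), the synonym lists and the five marked responses are all invented for the example. A rule is checked against human-marked responses, the disagreements are inspected, and the missing synonyms are added.

```python
import re


def make_rule(concepts):
    """Build a marker from a list of synonym lists (hypothetical helper).

    A response is accepted only if, for every concept, at least one of
    its synonyms appears as a whole word or phrase in the response.
    """
    patterns = [
        re.compile(
            r"\b(?:" + "|".join(re.escape(s) for s in synonyms) + r")\b",
            re.IGNORECASE,
        )
        for synonyms in concepts
    ]

    def mark(response):
        return all(p.search(response) for p in patterns)

    return mark


def disagreements(mark, marked_responses):
    """Return the (text, human_mark) pairs where the rule and the human differ."""
    return [(text, human) for text, human in marked_responses if mark(text) != human]


# Invented responses with invented human marks, for illustration only.
marked = [
    ("The forces are balanced", True),
    ("the forces are equal and opposite", True),
    ("There is no resultant force", True),
    ("The forces are unbalanced", False),
    ("gravity pulls it down", False),
]

# First attempt: misses synonyms the question author had not thought of.
rule_v1 = make_rule([["force", "forces"], ["balanced"]])
print(disagreements(rule_v1, marked))

# Second attempt: synonyms added after inspecting the disagreements.
rule_v2 = make_rule(
    [["force", "forces"], ["balanced", "equal and opposite", "no resultant"]]
)
print(disagreements(rule_v2, marked))
```

With real questions the same loop would be run over a few hundred marked responses rather than five, and each remaining disagreement needs a human decision about whether the rule or the original mark is at fault.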

This leads to a research question that I don’t think anyone has addressed, relevant to the CAA Conference’s theme of sharing questions: do students in different universities give sufficiently similar responses to enable questions that have been developed in one organisation, on the basis of thousands of responses from its students, to be used elsewhere?

Dear Sally,

Thanks for this post.

I was wondering if the limited uptake also has to do with two other things.

1. How easily can teachers in higher education outside the OU get access to your system? I know it is very difficult to have another (or an extra) CAA system set up in a university setting…

2. How does the free-text answering method apply to areas other than physics? Do you have examples from, say, Law, History and the like?

Kind regards,

Silvester

Hi Silvester

I think these are both good points. PMatch is now available as a Moodle question type, so hopefully more people will have access to the software in the future. However, it will remain true that questions of this type only work well when there are distinct right and wrong answers.

Many thanks for your comment.

best wishes

Sally

Dear Sally,

Do you have experience with the system offered by http://www.intelligentassessment.com/author.htm? I understood that this company developed the software that the OU integrated in Moodle. Or is it native Moodle functionality?

Kind regards,

Silvester

Dear Silvester

We initially used the IAT software and we were very impressed. However, we switched to PMatch (which is much simpler and is our own product) when we discovered that it gave answer matching that was just as good. See http://www.open.ac.uk/blogs/SallyJordan/?p=176. There is more information on this in my CAA 2012 paper (which has not yet appeared either on Open Research Online or on the CAA website; if neither has appeared by the end of the week I will send you a copy). See also:

Butcher, P.G. & Jordan, S.E. (2010). A comparison of human and computer marking of short free-text student responses. Computers & Education, 55, 489-499.

Jordan, S. & Mitchell, T. (2009). E-assessment for learning? The potential of short-answer free-text questions with tailored feedback. British Journal of Educational Technology, 40(2), 371-385.

I will post a bit more about PMatch later today or tomorrow.

best wishes

Sally

Hello again Silvester. My paper from CAA 2012 has now appeared on Open Research Online – http://oro.open.ac.uk/view/person/sej3.html

best wishes

Sally