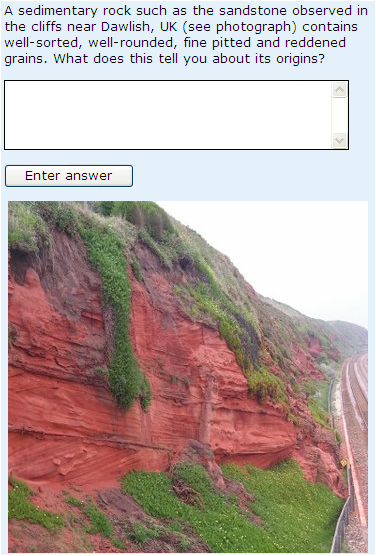

One of the general findings coming out of my evaluation of student responses to multi-try e-assessment questions relates to that wonderful thing I’ll call the ‘Law of unintended consequences’. I used to think that ‘students don’t read assessment questions’, but actually they do. It’s just that they don’t always interpret questions in the way that you (the author) intended; sometimes they read things into a question that you didn’t mean to say. The same is true of feedback; sometimes you give a message that you didn’t intend to give. All of this is true for assessment of all types, but I’ll talk you through some of the issues I have discovered in the context of one of our short-answer free-text questions. The original wording of this question is shown below:

I thought it was a lovely question and I’m always pleased to be able to use a holiday snap! So what was the problem?

1. Too little information. We didn’t tell people what form of answer was expected, and we didn’t tell them how long their answer should be. (The expected form was covered in the general instructions, and students whose first attempt was incorrect were told to have another go, remembering to write their answer ‘as a simple sentence’.)

2. Too much information. Students were supposed to work out the answer from the information given in the question, but sadly a number of them Googled ‘Dawlish’ and came across a very helpful(?) Devon County Council website describing the geology of the area.

The result of all of this was a few ridiculously long answers, some of which (even if they included the correct answer) clearly didn’t show understanding of the link between rock colour and texture and the environment in which it was formed. Here’s a response from a student, describing the formation of the sandstone shown and described in the question, but also describing the formation of the underlying breccia:

This shows imbedded aeolian (windblown) sands and fluvial (water laid) breccias. Patterns of cross bedding in the sandstone shows where dunes were partly eroded and then overlain by others. This more angular nature of the breccias indicates that they were deposited by sheet flash floods and are multi storey indicating repeated flooding impact. The change from aeolian sands to the fluvial breccias may have resulted from an increase in rainfall associated with climate change at the end of the lower Permian.

To deal with these problems, we removed the picture and the reference to Dawlish. We also set a filter to limit responses to no more than 20 words, and added the advisory wording shown below.

You can probably guess what happened. This change of wording dealt with the very long answers and the answers that told us everything to do with the geology of Dawlish, rather than working out the answer from the information provided. However, in the same way as described in my posting ‘How long is short?’, the average length of the responses actually increased – students had interpreted the instruction that their responses should be no more than 20 words to mean that their responses should be nearly 20 words.

So the next stage was to retain the filter but to alter the advisory warning as shown below. This is the wording currently in use; students who give a response that is too long are told to reduce the length to no more than 20 words and are given a ‘free’ go.

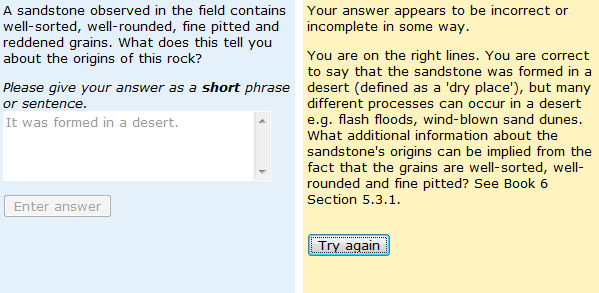
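A length filter of this kind can be sketched in a few lines. This is only an illustration, not the code behind the question: the function names `check_length` and `submit` are made up, and counting words by splitting on whitespace is an assumption. Only the 20-word limit and the ‘free’ go come from the description above.

```python
MAX_WORDS = 20  # the limit described in the post


def check_length(response: str, max_words: int = MAX_WORDS):
    """Return (accepted, message) for a free-text response.

    Words are counted by splitting on whitespace (an assumption).
    """
    word_count = len(response.split())
    if word_count > max_words:
        message = (
            f"Your answer is {word_count} words long. "
            f"Please reduce it to no more than {max_words} words."
        )
        return False, message
    return True, ""


def submit(response: str, attempts_used: int):
    """Return (attempts_used, message) after a submission.

    An over-long response is bounced back without using up an attempt,
    which is the 'free' go. Anything else counts as a real attempt and
    would be passed on to answer matching (not shown here).
    """
    accepted, message = check_length(response)
    if not accepted:
        return attempts_used, message
    return attempts_used + 1, ""
```

Note that the message only appears once the limit has been exceeded, so in this sketch the number 20 is not put in front of students who are already writing short answers.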

Surely, that was the end of the story? Not so. We knew that the answer matching accuracy for this question was particularly good, but became aware that a lot of students were unhappy. Many students gave a first attempt response along the lines of ‘It was formed in a desert’. The academic author of the question felt that this was not a sufficiently precise answer; he also wanted students to explain that the grains must have been carried by air (the evidence for this is in the description of the grains given in the question). The students, however, probably thinking of sand-dunes and forgetting that you can get flash floods in deserts, assumed that their answer implied both dry conditions and air-carried grains. You can imagine the scenario:

Student types (first attempt): It was formed in a desert

Feedback from computer: Your answer appears to be incorrect or incomplete in some way. Have another go, remembering to express your answer as a simple sentence.

Student thinks: Stupid computer!

Student types (second attempt): It was formed in a desert i.e. a dry and windy place.

Response marked as correct. Student thinks: STUPID COMPUTER!

The problem was that the ‘general’ first attempt feedback was just not good enough on this occasion. Thankfully, we have been able to introduce targeted feedback for partially correct responses into this and other similar questions, as shown below:

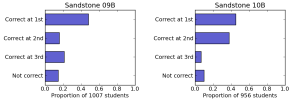
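To make the idea concrete, here is a minimal sketch of routing a first attempt to generic or targeted feedback. It is not the answer matching used for this question: the keyword patterns are a deliberately naive stand-in, the name `first_attempt_feedback` is hypothetical, and the targeted message is invented wording for illustration. Only the generic message is taken from the scenario above.

```python
import re

# Generic first-attempt feedback, as quoted in the scenario above.
GENERIC_FEEDBACK = (
    "Your answer appears to be incorrect or incomplete in some way. "
    "Have another go, remembering to express your answer as a simple sentence."
)

# Hypothetical wording for illustration only; not the real feedback text.
TARGETED_FEEDBACK = (
    "You are right that the sandstone formed in a desert, but your answer "
    "is incomplete. What does the description of the grains tell you about "
    "how they were transported?"
)

# Naive keyword patterns (an assumption); real answer matching is far more careful.
DESERT = re.compile(r"\b(desert|arid|dry)\b", re.IGNORECASE)
WIND = re.compile(r"\b(wind\w*|air|aeolian)\b", re.IGNORECASE)


def first_attempt_feedback(response: str):
    """Return (status, feedback) for a first-attempt response."""
    mentions_desert = bool(DESERT.search(response))
    mentions_wind = bool(WIND.search(response))
    if mentions_desert and mentions_wind:
        return "correct", ""
    if mentions_desert:
        # Partially correct: acknowledge what is right, hint at what is missing.
        return "partial", TARGETED_FEEDBACK
    return "incorrect", GENERIC_FEEDBACK
```

With these patterns, ‘It was formed in a desert’ gets the targeted hint rather than the generic message, so the student learns that the computer has understood the answer and wants more. Keyword matching like this would be fooled by a response such as ‘not in a desert’, which is one reason it should be read as a sketch only.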

This has had a spectacular impact on student attitude to this question, on their attitude to short-answer free-text questions in general and also on their success at second attempt. The figure below compares the proportion of students who were correct at first, second and third attempt, or not at all, for uses of the question before (LHS) and after (RHS) targeted feedback was added for partially correct first-attempt responses.

There is also some evidence that the generic response after an unsuccessful first attempt is causing students to believe that the computer has failed to understand their answer, rather than the more accurate message that their answer is fundamentally wrong. So the work goes on. Clearly the detailed wording of questions and feedback is extremely important.

Hi Sally,

Just wanted to let you know that I find your posts about the importance of question wording and feedback extremely useful. A must read for everyone that is involved in the construction of e-assessments if you ask me.

So, thank you and keep up the good work!

Thanks Sander!

Sally
